Why PromptFluent Built an AI Business Prompt Library

(And Why That Matters for Enterprise AI Execution) Artificial intelligence is not failing in business because the models are weak.

18 min read

Stephanie Unterweger : Apr 28, 2026 1:19:53 PM

Let me tell you a story about how the company I love the most in AI quietly changed the rules on me.

I am, by any reasonable definition, an Anthropic superfan. I have been a willing, happy $200-per-month Claude subscriber since the day it became available. I have regularly and cheerfully paid overage fees when I blew through my monthly tokens. I have paid additional fees for API batch processing without blinking. My typical monthly spend with Anthropic hovered comfortably under $350, and I considered it the best money I spent on anything in my professional life.

I am the person who, in December 2025, tweeted from my company's account: "Now that @claudeai has resurrected itself, can we admit Opus 4.5 is basically a tiny wizard doing spells for you? The other agents were neat, but #Opus might actually make everyday people with basic logic skills rich. Meanwhile, I'm still Googling 'how to boil eggs.'"

I meant every word. I still do.

So when Anthropic recently alerted me that I'd run through my overage credits and needed to top them off, I didn't panic. I added $250, figuring that would comfortably cover me for weeks since I didn't have any large batch processes planned.

Two hours later, I checked my Claude Console.

$131 spent in the last 24 hours.

Half of my credits — gone — in less than a day.

I hadn't run any massive batch jobs. I hadn't done anything I'd consider extraordinary by my own standards. I had linked my OpenClaw agent to my API and started developing some new Claude agents. These agents hadn't done anything revolutionary. They hadn't accomplished anything more complex than work I'd done with Claude dozens of times before, for very small fees.

And that was the moment I stopped being a cheerful superfan and started being an investigative one.

I sat down. I pulled up the pricing documentation. I went through the models, the token rates, the inputs, the outputs, the multipliers, the surcharges, the fine print. And what I found was surprising and, frankly, disappointing — from a platform I have loved and extolled to everyone I know.

Here's what I discovered — and what every business using Claude in 2026 needs to understand.

If you only read the top line, Anthropic's 2026 pricing story sounds like pure good news. The company's flagship Opus model has dropped from $15/$75 per million input/output tokens (the Opus 4 and 4.1 era) to $5/$25 per million tokens with Opus 4.6 and the newly released Opus 4.7. That's a 67% reduction at the flagship tier. Sonnet has held steady at $3/$15 per million tokens across four generations. The 1-million-token context window, which used to carry a significant surcharge, is now included at standard pricing for both Opus 4.6 and Sonnet 4.6.

On paper, this is a genuinely impressive value proposition.

But underneath those headlines, a series of structural changes — some announced quietly, some buried in technical documentation, and some not announced at all — are creating a pricing landscape where the actual cost of using Claude depends less on which model you choose and more on how you use it, what tools you connect, and whether you're paying attention to the fine print.

Here are the seven hidden costs that businesses aren't seeing until the invoice arrives.

The most straightforward hidden cost is also the most avoidable, in theory. Anthropic's previous-generation flagship models, Claude Opus 4 and Opus 4.1, are priced at $15 per million input tokens and $75 per million output tokens. The current-generation Opus 4.6 (and now Opus 4.7) costs $5/$25. That means anyone still running on the older models is paying three times as much for objectively inferior performance.

So why would anyone stay?

Because model migration is not a version upgrade. It's a regression testing project.

Organizations that have built production systems on Opus 4 or 4.1 — particularly in regulated industries like healthcare, financial services, and legal tech — have invested significant time tuning prompts, validating outputs, and documenting expected behaviors against a specific model's characteristics. Switching to a newer model doesn't just mean changing a model ID in an API call. It means re-validating every prompt in the portfolio, re-testing every output against quality standards, and re-certifying compliance in environments where "it works the same" isn't sufficient — you have to prove it works the same.

This is exactly the problem that prompt engineering governance platforms are designed to solve. When your prompt portfolio is unmanaged — no systematic audit trail, no testing framework, no version control — every model migration becomes a high-risk, high-effort project that gets deprioritized behind revenue-generating work. Meanwhile, the "legacy model tax" keeps compounding month after month.

What this costs you: A team processing 10 million input tokens and 4 million output tokens per month on Opus 4.1 pays $150 in input costs and $300 in output costs — $450 per month. The same workload on Opus 4.6 costs $50 in input and $100 in output — $150 per month. That is $300 per month, or $3,600 per year, in pure overspend for the same work done by a less capable model.
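To make the legacy-model arithmetic concrete, here is a small Python sketch that reproduces the comparison above. The model keys are illustrative labels for this example, not Anthropic API model IDs, and the prices are the per-million-token list prices quoted in this article; check current pricing before relying on them.

```python
# Per-million-token list prices (USD) as quoted in this article: (input, output).
# Keys are illustrative labels, not Anthropic API model IDs.
PRICES = {
    "opus-4.1": (15.00, 75.00),
    "opus-4.6": (5.00, 25.00),
}


def monthly_cost(model: str, input_mtok: float, output_mtok: float) -> float:
    """USD for one month of traffic, with token volumes given in millions."""
    in_rate, out_rate = PRICES[model]
    return input_mtok * in_rate + output_mtok * out_rate


legacy = monthly_cost("opus-4.1", 10, 4)   # 10M input, 4M output
current = monthly_cost("opus-4.6", 10, 4)
overspend = legacy - current

print(f"Opus 4.1: ${legacy:,.2f}/month, Opus 4.6: ${current:,.2f}/month")
print(f"Overspend: ${overspend:,.2f}/month, ${overspend * 12:,.2f}/year")
```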

This is the pricing story that nobody is talking about, and it is the one that should concern high-volume production teams the most.

Claude 3 Haiku, which launched in 2024, cost $0.25 per million input tokens and $1.25 per million output tokens. It was one-twelfth the price of Sonnet and became the default choice for classification, routing, extraction, moderation, and any high-throughput workload where speed and cost mattered more than deep reasoning.

Then came Haiku 3.5, at $0.80/$4 per million tokens — more than three times the cost of the original Haiku.

And now Haiku 4.5 sits at $1/$5 per million tokens. That's a 4x price increase from Claude 3 Haiku to Haiku 4.5 at the input level, and a 4x increase on output as well.

To be fair, the capability improvements are real. Haiku 4.5 performs close to where Sonnet 4 was just months earlier, which is genuinely impressive. But the economic positioning has fundamentally shifted. What was once a model that cost one-twelfth of Sonnet is now one that costs one-third of Sonnet. For organizations running millions of simple API calls per month — chatbots, customer service triage, content classification, data extraction — this quiet price creep at the budget tier has a compounding impact that dwarfs any savings from the Opus price reduction.

And here's the kicker: Claude 3 Haiku, the cheapest model Anthropic ever offered, was deprecated on April 19, 2026. The floor just got a lot higher.

What this costs you: An organization running 50 million tokens of Haiku input per month at classification tasks saw their monthly input cost go from $12.50 (Claude 3 Haiku) to $50 (Haiku 4.5). That's $450 per year in additional costs on what was supposed to be the budget option — and that's input costs alone. Add output tokens and you're looking at a 4x total increase on your most cost-sensitive workloads.
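A short Python sketch of the Haiku math, using the input prices quoted above. It shows how Haiku's input price compares with Sonnet's across generations and the annual input-cost delta for the 50-million-token example. The dictionary keys are display labels, not API model IDs.

```python
# Input prices in USD per million tokens, as quoted in this article.
SONNET_INPUT = 3.00
HAIKU_INPUT = {
    "Claude 3 Haiku": 0.25,
    "Haiku 3.5": 0.80,
    "Haiku 4.5": 1.00,
}

# How Haiku's input price compares with Sonnet's across generations.
for name, price in HAIKU_INPUT.items():
    print(f"{name}: ${price:.2f}/MTok, {price / SONNET_INPUT:.1%} of Sonnet's input price")

# The 50M-input-token classification workload from the example above.
monthly_input_mtok = 50
old = monthly_input_mtok * HAIKU_INPUT["Claude 3 Haiku"]
new = monthly_input_mtok * HAIKU_INPUT["Haiku 4.5"]
annual_increase = (new - old) * 12
print(f"Monthly input: ${old:.2f} -> ${new:.2f}; extra per year: ${annual_increase:,.2f}")
```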

This one is the most elegant hidden cost I've seen from any AI provider, because it is technically not a price increase at all.

When Anthropic released Opus 4.7 on April 16, 2026, the headline was reassuring: same pricing as Opus 4.6 — $5/$25 per million tokens. No price hike.

But buried in the release notes is a detail that changes the math entirely: Opus 4.7 uses a new tokenizer that can produce up to 35% more tokens for the same input text.

Let that sink in. The price per token hasn't changed. But the number of tokens it takes to represent your content has increased. Your prompts haven't gotten longer. Your documents haven't grown. But the meter is now running faster.

The impact varies by content type. Standard English prose might see a modest increase. Code, structured data (JSON, XML), and non-English text get hit hardest — potentially approaching that 35% ceiling. Anthropic frames this as a tradeoff for the model's improved accuracy and instruction-following, which is fair. But the financial implication is real: a request that cost $0.10 on Opus 4.6 could cost anywhere from $0.10 to $0.135 on Opus 4.7, depending on content mix.

And because output tokens are priced at 5x the input rate ($25 vs. $5 per million), each extra output token the new tokenizer produces costs five times as much as an extra input token. In dollar terms, the change hits output-heavy workloads hardest.

What this costs you: An organization spending $5,000/month on Opus 4.6 that migrates to Opus 4.7 without adjusting workflows could see bills increase by $500 to $1,750 per month (a 10% to 35% increase), with zero change in usage patterns or output volume. Over a year, that is $6,000 to $21,000 in additional spend that never showed up in a pricing announcement.
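If you want a rough first estimate of your own exposure before migrating, you can scale current spend by an assumed token-inflation percentage. This is a simplifying sketch: it assumes per-token prices are unchanged and that every request inflates by the same fraction, which real traffic will not do, since code, structured data, and non-English text are hit hardest. The 20% midpoint is an arbitrary illustration. Measuring actual token counts on a sample of your own traffic is the more reliable check.

```python
def projected_monthly_spend(current_spend: float, token_inflation: float) -> float:
    """Estimate spend after a tokenizer change.

    Simplifying assumptions: per-token prices are unchanged, and every request's
    token count (input and output) grows by the same fraction.
    """
    return current_spend * (1 + token_inflation)


baseline = 5_000.00  # current monthly Opus 4.6 spend, USD
for inflation in (0.10, 0.20, 0.35):  # 20% is an illustrative midpoint
    projected = projected_monthly_spend(baseline, inflation)
    extra = projected - baseline
    print(f"{inflation:.0%} more tokens: ${projected:,.0f}/month "
          f"(+${extra:,.0f}/month, +${extra * 12:,.0f}/year)")
```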

In April 2026, Anthropic restructured its enterprise plan so that the seat fee no longer bundles any usage allowance. Every token is now billed at standard API rates on top of the base $20 per seat per month.

Organizations on older seat-based plans with fixed usage allowances must migrate by their next contract renewal, or they lose their grandfathered terms.

This shift from bundled to metered billing is the most structurally significant pricing change Anthropic has made. Under the old model, enterprise customers could predict their Claude costs with reasonable accuracy: X seats times Y dollars per seat, with a known usage ceiling. Under the new model, costs scale with consumption — and in an era of agentic AI, where autonomous agents can burn through tokens at rates no human user would approach, consumption is dramatically harder to predict and control.

As one AI consultancy CEO noted publicly, some enterprise clients were already spending far beyond what their base seat fee covered, with roughly 80% of the total bill already coming from metered API overages. For those organizations, the shift is a formalization of what was already happening. But for lighter users who stayed inside the bundle, or for organizations that assumed their seat-based pricing was their total Claude cost, the transition to pure metered billing could be significant.

What this costs you: This depends entirely on your usage patterns, which is precisely the problem. An enterprise with 100 seats previously paying a predictable flat fee now faces variable monthly bills that scale with every token consumed. Without active monitoring and governance — model routing, prompt caching, batch processing optimization — costs can escalate quickly and unpredictably.

This is the hidden cost that hit me personally.

On April 4, 2026, Anthropic blocked Claude Pro and Max subscribers from using their flat-rate plans with third-party AI agent frameworks — starting with OpenClaw, the open-source agent platform with over 247,000 GitHub stars. Users who had been running autonomous AI agents through their subscription plans were suddenly required to switch to pay-as-you-go API billing.

The scale of the impact was staggering. More than 135,000 active OpenClaw instances were affected. Industry analysts had estimated a price gap of more than five times between what heavy agentic users paid under flat subscriptions and what equivalent usage would cost at API rates. One widely cited case showed a tech blogger burning through 180 million tokens in a single month — $3,600 at API rates.

This is what caught me. As an Opus loyalist (foolishly, in retrospect, running Opus 4.7 for tasks that probably didn't require it), I connected my OpenClaw agent to my API and started developing new Claude agents. The agents weren't doing anything extraordinary — certainly nothing more complex than work I'd routinely done with Claude for small fees in the past. But the metered API billing, combined with Opus-tier token rates, meant that routine agent development burned through $131 in a single day.

To be clear: I understand why Anthropic made this change. Third-party frameworks were routing enormous amounts of traffic through flat-rate subscriptions that were never priced for always-on agentic workloads. The economics simply didn't work. Anthropic stated that third-party tools put "outsized strain" on its infrastructure and that capacity needed to be protected for its own products.

But "understanding the business logic" and "being prepared for the financial impact" are two very different things. Most users — including sophisticated ones like me, someone who literally builds AI infrastructure for a living — were caught off guard by the magnitude of the cost increase.

What this costs you: Workloads that previously cost $200/month on a Max subscription can run $1,000 or more on direct API billing. One user reported going from a $200 subscription to a projected $400/month on API billing within the first week. Another reported $109.55 in a single day of Opus usage. The specific impact depends on your agent's model selection, token volume, and whether you've optimized for prompt caching — but the directional change is dramatic.

Extended thinking is one of Claude's most powerful features for complex reasoning tasks. It allows the model to perform internal reasoning before generating a final response, significantly improving output quality on multi-step problems.

Here's what most users don't realize: extended thinking tokens are billed as standard output tokens at the model's normal rate. They are not a separate pricing tier with a discount. They are not free. They are full-price output tokens — the most expensive tokens in the system.

At Opus 4.6's $25 per million output tokens, a response with 500 visible output tokens and 2,000 thinking tokens costs $0.0625, compared to $0.0125 without thinking. That's a 5x multiplier on output cost for a single response.

This matters because extended thinking is exactly the kind of feature that AI-forward organizations want to use. Complex analysis, legal document review, financial modeling, code architecture decisions — these are the high-value use cases that justify paying for a premium model. But the thinking tokens that make those outputs better also make them dramatically more expensive, and the cost is invisible unless you're actively monitoring token-level billing.

What this costs you: A team running 1,000 complex queries per day with extended thinking enabled, averaging 2,000 thinking tokens per response, is spending approximately $50/day (about $1,500 over a 30-day month) on thinking tokens alone. Without extended thinking, the 500 visible output tokens per response from the example above would cost about $375/month. The feature improves quality meaningfully, but the cost needs to be part of the budget, not a surprise.
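Because thinking tokens are billed as ordinary output tokens, the cost model is simple addition. This Python sketch reproduces the per-response and monthly thinking-token figures above, assuming Opus 4.6's $25-per-million output price and a 30-day month.

```python
OPUS_OUTPUT_PER_MTOK = 25.00  # USD, Opus 4.6 output price quoted in this article


def output_cost(visible_tokens: int, thinking_tokens: int = 0) -> float:
    """Thinking tokens are billed as ordinary output tokens, so they add to the count."""
    return (visible_tokens + thinking_tokens) * OPUS_OUTPUT_PER_MTOK / 1_000_000


# Per response: 500 visible tokens, with and without 2,000 thinking tokens.
with_thinking = output_cost(500, 2_000)
without_thinking = output_cost(500)
print(f"Per response: ${with_thinking:.4f} vs ${without_thinking:.4f} "
      f"({with_thinking / without_thinking:.0f}x)")

# Monthly: 1,000 queries per day over a 30-day month, thinking tokens only.
queries_per_month = 1_000 * 30
thinking_only = queries_per_month * output_cost(0, 2_000)
print(f"Thinking tokens alone: ${thinking_only:,.0f}/month")
```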

The final hidden cost isn't a single charge — it's the way multiple pricing multipliers stack on top of each other in ways that are easy to miss.

Opus 4.6 offers a "fast mode" for latency-sensitive applications at 6x standard rates: $30 per million input tokens and $150 per million output tokens. If your application also requires US-only data residency (common in healthcare, financial services, and government), that adds a 1.1x multiplier on all token pricing categories. And both of these multipliers stack with prompt caching write costs.

So a fast-mode Opus request with US data residency and a 1-hour cache write could be priced at: base rate ($5) × fast mode (6x) × data residency (1.1x) × cache write (2x) = $66 per million input tokens. That's 4.4 times what legacy Opus 4 cost at its standard rate of $15 per million input tokens.
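The stacked-multiplier calculation can be written out as follows. This sketch assumes, as the formula above does, that the multipliers compound multiplicatively on the base input rate. The 1-hour cache-write premium applies to input tokens, so it computes the input side only.

```python
BASE_OPUS_INPUT = 5.00       # USD per million input tokens (Opus 4.6)
LEGACY_OPUS_4_INPUT = 15.00  # USD per million input tokens (Opus 4)

# Multipliers as described in this article, assumed to compound multiplicatively.
MULTIPLIERS = {
    "fast_mode": 6.0,
    "us_data_residency": 1.1,
    "cache_write_1h": 2.0,
}


def effective_input_rate(base: float, *applied: str) -> float:
    """Apply each named multiplier to the base input rate in turn."""
    rate = base
    for name in applied:
        rate *= MULTIPLIERS[name]
    return rate


stacked = effective_input_rate(
    BASE_OPUS_INPUT, "fast_mode", "us_data_residency", "cache_write_1h"
)
print(f"${stacked:.2f} per million input tokens, "
      f"{stacked / LEGACY_OPUS_4_INPUT:.1f}x legacy Opus 4 input pricing")
```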

Are these edge cases? Somewhat. But they are not hypothetical. Organizations building real-time, compliance-sensitive applications — exactly the kind of high-value enterprise use cases that justify Opus-tier pricing — can find themselves in these multiplier stacks without realizing how the costs compound.

What this costs you: The range is enormous. A straightforward Opus 4.6 API call costs $5/$25 per million tokens. A fast-mode, US-residency Opus call with a 1-hour cache write can reach $66 per million input tokens and $165 per million output tokens (the cache-write premium applies only to input). Most organizations will land somewhere in between, but the spread illustrates why "what does Claude cost?" has no single answer anymore.

Here's where I have to be honest about my own conflicted feelings, because I think they reflect what a lot of AI-forward business leaders are experiencing right now.

I genuinely love Anthropic.

I believe they have demonstrated remarkable integrity as a company. Their stance on responsible AI development, their handling of sensitive government and defense considerations under the current administration, their technical and engineering brilliance — all of it has earned a level of trust and admiration that I don't extend to many companies.

I want Anthropic to have a strong business model. I want them to be viable and profitable. I want them to avoid the governance and ethical issues that have plagued some of their competitors. I want them to make enough money to continue their innovation and R&D, because the work they're doing genuinely advances what's possible for people and organizations around the world.

And I also think they need to be fair to their customers and transparent about what these pricing changes actually mean in practice.

Right now, there's a gap between the headline pricing story ("Opus is 67% cheaper!") and the lived experience of power users and businesses that are navigating a more complex, less predictable cost structure than what existed six months ago. Closing that gap isn't just a customer satisfaction issue — it's a trust issue. And trust is Anthropic's single most valuable competitive asset.

The flat-fee subscription era for agentic AI workloads is effectively over — not just at Anthropic, but across the industry. OpenAI shifted Codex to token metering. GitHub tightened Copilot limits. Windsurf replaced its credit system with quotas. This is an industry-wide structural shift, and Anthropic isn't doing anything its competitors aren't also doing.

But Anthropic is the company I believe in the most. And that means I hold them to a higher standard.

If you're a business building on Claude or considering it, here are the concrete steps you should take to manage these hidden costs.

If you have any workloads still running on Opus 4 or 4.1, migrating to Opus 4.6 is the single highest-ROI action available. The 67% price reduction is real and immediate. But migration requires systematic prompt testing, which means you need a prompt management framework — not just a spreadsheet of API calls.

Not every task requires Opus. A well-designed routing layer that sends simple classification and extraction to Haiku 4.5 ($1/$5), balanced production work to Sonnet 4.6 ($3/$15), and only the most complex reasoning to Opus ($5/$25) can reduce blended costs by 50–70% compared to running everything through Opus. This is the single most impactful architectural decision for cost management.
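Here is a minimal routing sketch, assuming tasks arrive already labeled by type. The task categories, the routing table, and the monthly traffic mix are all hypothetical; a production router would typically classify requests itself and be validated against quality tests. What it illustrates is that blended savings depend heavily on how much of your volume is simple work.

```python
from dataclasses import dataclass

# (input, output) USD per million tokens, as quoted in this article.
PRICES = {
    "haiku-4.5": (1.00, 5.00),
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.6": (5.00, 25.00),
}

# Hypothetical routing table: task type -> model tier.
ROUTES = {
    "classification": "haiku-4.5",
    "extraction": "haiku-4.5",
    "drafting": "sonnet-4.6",
    "complex_reasoning": "opus-4.6",
}


@dataclass
class TaskType:
    kind: str
    input_tokens: int      # average per request
    output_tokens: int     # average per request
    monthly_requests: int


def route(task: TaskType) -> str:
    # Unrecognized task types fall back to the mid tier, not the most expensive one.
    return ROUTES.get(task.kind, "sonnet-4.6")


def monthly_cost(model: str, task: TaskType) -> float:
    in_rate, out_rate = PRICES[model]
    per_request = task.input_tokens * in_rate + task.output_tokens * out_rate
    return task.monthly_requests * per_request / 1_000_000


# Hypothetical traffic mix: mostly simple work, a little deep reasoning.
tasks = [
    TaskType("classification", 800, 20, 50_000),
    TaskType("extraction", 2_000, 300, 20_000),
    TaskType("drafting", 3_000, 1_200, 5_000),
    TaskType("complex_reasoning", 10_000, 2_000, 1_000),
]

routed = sum(monthly_cost(route(t), t) for t in tasks)
all_opus = sum(monthly_cost("opus-4.6", t) for t in tasks)
print(f"Routed: ${routed:,.2f}/month, all-Opus: ${all_opus:,.2f}/month, "
      f"saving {1 - routed / all_opus:.0%}")
```

Changing the counts in `tasks` is a quick way to see how sensitive the savings are to your own traffic mix.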

Cached input tokens cost only 10% of the standard input rate — a 90% discount. For any application with a large, reused system prompt or repeated document context, this is the most powerful cost lever available. If your system prompts or context documents are being re-sent with every API call without caching, you are leaving 90% savings on the table.
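The caching discount is easiest to see with the write premium included. This sketch assumes a 5-minute cache (writes at 1.25x the base input rate, reads at 10%, per the glossary) and that requests arrive often enough for every call after the first to hit the cache; real hit rates will be lower when traffic is bursty. The 20,000-token system prompt and 10,000-call volume are hypothetical.

```python
def input_cost(system_tokens: int, user_tokens: int, calls: int,
               base_rate: float, cached: bool) -> float:
    """USD of input for `calls` requests that all resend the same system prompt.

    With caching, the prefix is written once at 1.25x the base rate (5-minute
    cache) and every later call is assumed to read it at 0.10x. Per-call user
    messages are never cached here.
    """
    per_token = base_rate / 1_000_000
    user = calls * user_tokens * per_token
    if not cached:
        return calls * system_tokens * per_token + user
    write = system_tokens * per_token * 1.25
    reads = (calls - 1) * system_tokens * per_token * 0.10
    return write + reads + user


# Hypothetical workload: 20,000-token system prompt, 500-token user messages,
# 10,000 calls, priced at Sonnet 4.6's $3 per million input tokens.
uncached = input_cost(20_000, 500, 10_000, 3.00, cached=False)
cached = input_cost(20_000, 500, 10_000, 3.00, cached=True)
print(f"Uncached: ${uncached:,.2f}  Cached: ${cached:,.2f}  "
      f"Saving: {1 - cached / uncached:.0%}")
```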

The Batch API provides a flat 50% discount on both input and output tokens with no quality difference — only a latency tradeoff (results within 24 hours). For document processing pipelines, data enrichment, nightly analytics, evaluation runs, and any workload where real-time response isn't required, batch processing is free money.

If you're using extended thinking (and you should be, for tasks that benefit from it), set thinking token budgets appropriate to task complexity. Use adaptive thinking when available — it lets the model skip expensive reasoning for simple requests and engage deep thinking only when needed. Monitor actual thinking token volumes against your budget.
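One way to set thinking budgets by task complexity is a small lookup that builds the request arguments. This is a sketch, not PromptFluent's or Anthropic's method: the tiers, budget numbers, and answer headroom are hypothetical, and the `thinking` parameter shape (`{"type": "enabled", "budget_tokens": N}`), the rule that `budget_tokens` stay below `max_tokens`, and the 1,024-token minimum reflect Anthropic's Messages API documentation at the time of writing, so verify them against current docs. Adaptive thinking is not shown.

```python
# Hypothetical complexity tiers and thinking budgets; tune against your own quality tests.
THINKING_BUDGETS = {
    "simple": None,        # skip extended thinking entirely
    "moderate": 2_000,
    "complex": 8_000,
}

MIN_BUDGET = 1_024       # documented minimum at the time of writing; verify before use
ANSWER_HEADROOM = 1_000  # tokens reserved for the visible answer (assumption)


def thinking_params(complexity: str, max_tokens: int) -> dict:
    """Build `max_tokens` and, if warranted, `thinking` arguments for a Messages API call."""
    budget = THINKING_BUDGETS[complexity]
    if budget is None:
        return {"max_tokens": max_tokens}
    budget = max(budget, MIN_BUDGET)
    # budget_tokens must be lower than max_tokens, so leave room for the answer.
    return {
        "max_tokens": max(max_tokens, budget + ANSWER_HEADROOM),
        "thinking": {"type": "enabled", "budget_tokens": budget},
    }


print(thinking_params("simple", 1_000))
print(thinking_params("complex", 4_000))
```

With the official `anthropic` Python SDK, the returned dict can be passed into a request, for example `client.messages.create(model=..., messages=..., **thinking_params("moderate", 4_000))`; the model ID is yours to choose.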

The era of "subscribe and forget" is over. Organizations need visibility into effective cost per request, per agent, per feature, and per customer — not just headline cost per token. This means tracking not just which models you're calling, but how many tokens each call consumes, how your caching hit rates are performing, and where your budget is actually going.
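Tracking effective cost per agent can start from the token counts each API response already reports. This sketch assumes you log each response's `usage` block as a plain dict alongside a hypothetical agent name and a model label; the field names (`input_tokens`, `output_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`) are those in Anthropic's Messages API usage object. It prices cache writes at the 5-minute rate and ignores fast-mode and data-residency multipliers.

```python
from collections import defaultdict

# (input, output) USD per million tokens, as quoted in this article.
PRICES = {"haiku-4.5": (1.00, 5.00), "sonnet-4.6": (3.00, 15.00), "opus-4.6": (5.00, 25.00)}
CACHE_WRITE_MULT = 1.25  # 5-minute cache writes
CACHE_READ_MULT = 0.10


def request_cost(model: str, usage: dict) -> float:
    """USD for one request, computed from the token counts in its `usage` block."""
    in_rate, out_rate = PRICES[model]
    total = (
        usage.get("input_tokens", 0) * in_rate
        + usage.get("cache_creation_input_tokens", 0) * in_rate * CACHE_WRITE_MULT
        + usage.get("cache_read_input_tokens", 0) * in_rate * CACHE_READ_MULT
        + usage.get("output_tokens", 0) * out_rate  # thinking tokens are billed as output
    )
    return total / 1_000_000


def cost_by_agent(usage_log: list[dict]) -> dict[str, float]:
    """Sum request costs per agent name."""
    totals: dict[str, float] = defaultdict(float)
    for entry in usage_log:
        totals[entry["agent"]] += request_cost(entry["model"], entry["usage"])
    return dict(totals)


# Hypothetical log entries; agent names are made up for illustration.
usage_log = [
    {"agent": "triage", "model": "haiku-4.5",
     "usage": {"input_tokens": 1_200, "output_tokens": 50}},
    {"agent": "research", "model": "opus-4.6",
     "usage": {"input_tokens": 2_000, "cache_read_input_tokens": 30_000,
               "output_tokens": 3_000}},
]

costs = cost_by_agent(usage_log)
for agent, usd in sorted(costs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{agent}: ${usd:.4f}")
```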

This is the long game, and it's where prompt governance platforms become essential. If your prompts are tightly coupled to a specific model's behavior, every pricing change or model upgrade becomes a migration crisis. Prompts that are well-documented, systematically tested, and designed for portability give you the freedom to move between models, tiers, and providers based on cost-performance optimization — not vendor lock-in.

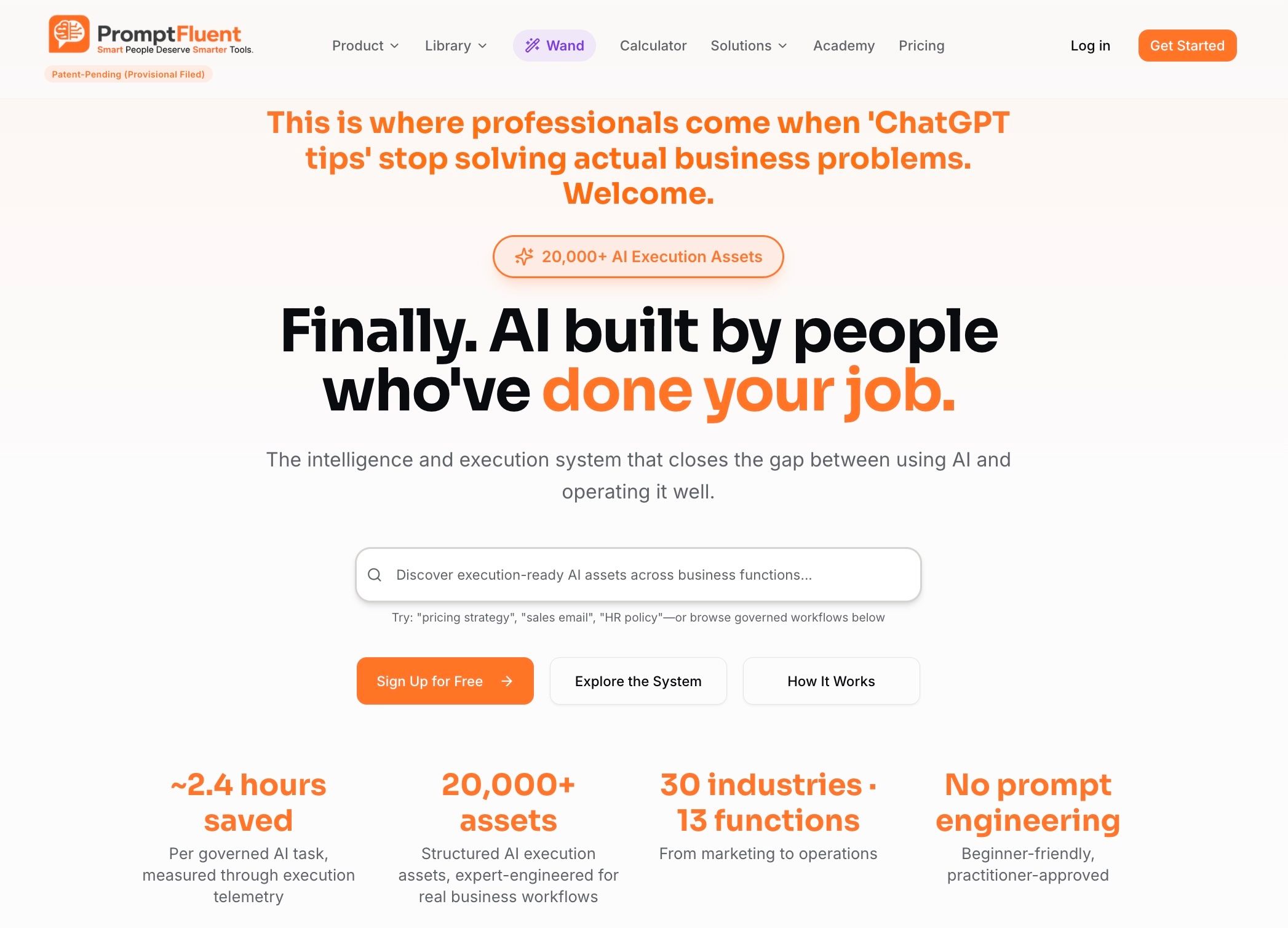

This is the core problem that PromptFluent was built to solve. When your prompt portfolio is managed as a proper business asset — with version control, testing frameworks, execution analytics, and governance workflows — model migrations become routine operations, not fire drills. You can confidently move workloads from Opus to Sonnet when the quality threshold is met, route tasks to the right model tier based on actual complexity, and avoid the "legacy model tax" that traps organizations paying 3x for work that could be done better and cheaper on the current generation.


Anthropic's 2026 pricing changes are not a story of a company gouging its customers. They are a story of an industry growing up — moving from subsidized early-adopter pricing to the real economics of running frontier AI infrastructure at scale.

The headline numbers are legitimately improved. Opus at $5/$25 is a better deal than Opus at $15/$75. Sonnet at $3/$15 for Opus-level performance is remarkable. The elimination of long-context surcharges for the 4.6-generation models is a genuine win.

But the hidden costs are real, they are material, and they catch even sophisticated users off guard. The legacy model tax, the tokenizer trap, the Haiku price creep, the enterprise billing restructure, the third-party tool lockout, the thinking token multiplier, and the stacking surcharges all add up to a pricing landscape that demands active management, not passive consumption.

I'm still an Anthropic fan. I'm still a Claude evangelist. I'm still that person at the cocktail party trying to get anyone to let me explain the miracles of Opus (and still struggling to find takers).

But I'm now an evangelist with a spreadsheet open. And I'd recommend you do the same.

Token: The basic unit of text that AI models process. One token roughly equals 4 characters or 0.75 words in English. All Claude API pricing is based on tokens consumed.

Input Tokens: Tokens sent to the model in your prompt, including system prompts, context documents, and user messages. Priced lower than output tokens.

Output Tokens: Tokens generated by the model in its response. Priced at 5x the input rate across all Claude models.

MTok (Million Tokens): Standard pricing unit. $5/MTok means $5 per one million tokens processed.

Extended Thinking Tokens: Internal reasoning tokens generated by the model before producing a visible response. Billed as standard output tokens — not discounted.

Prompt Caching: A feature that stores previously processed portions of a prompt so subsequent requests can read from cache at 10% of the standard input rate. Cache writes cost 1.25x (5-minute) or 2x (1-hour) the base input price.

Batch API: Asynchronous processing mode that returns results within 24 hours at a 50% discount on all token costs.

Tokenizer: The system that converts text into tokens. Different model versions may use different tokenizers, meaning the same text can produce different token counts — and different costs.

Model Routing: An architectural pattern where requests are automatically directed to different Claude models based on task complexity, optimizing the cost-quality tradeoff.

Prompt Debt: The accumulated cost and risk created by unmanaged, undocumented, model-specific prompts that cannot be easily migrated, tested, or optimized when pricing or models change.

FinOps (Financial Operations): The practice of managing and optimizing cloud and AI infrastructure spending through governance, monitoring, and cost allocation.

Agentic AI: AI systems that operate autonomously, performing multi-step tasks with tool access and decision-making capabilities. These workloads consume significantly more tokens than conversational chat interactions.

The headline change was a 67% price reduction at the Opus tier, from $15/$75 per million input/output tokens (Opus 4/4.1) to $5/$25 (Opus 4.6 and 4.7). Sonnet pricing held steady at $3/$15 across generations. However, Haiku pricing increased 4x from the original Claude 3 Haiku ($0.25/$1.25) to Haiku 4.5 ($1/$5), and several structural changes — including the Opus 4.7 tokenizer, enterprise billing restructuring, and third-party tool lockouts — create hidden cost increases for many users.

Several factors can increase effective costs despite lower headline rates. The Opus 4.7 tokenizer generates up to 35% more tokens for the same text. Extended thinking tokens are billed as full-price output tokens (the most expensive token type). Third-party agent tools now require pay-as-you-go API billing instead of flat-rate subscription coverage. And enterprises transitioning from bundled seat pricing to metered usage may see significant bill increases.

On April 4, 2026, Anthropic blocked Claude Pro and Max subscribers from routing their subscription usage through third-party AI agent frameworks. Users must now pay for this usage via pay-as-you-go API billing. Anthropic stated that third-party tools placed outsized strain on infrastructure and capacity needed to be reserved for its own products.

Yes. Claude remains one of the most capable AI platforms available, with particular strengths in reasoning, coding, instruction following, and writing quality. The current-generation models (Opus 4.6/4.7, Sonnet 4.6, Haiku 4.5) offer excellent price-performance ratios. The key is active cost management — using model routing, prompt caching, batch processing, and prompt governance to ensure you're paying the right price for each task.

The highest-impact strategies are: (1) implementing intelligent model routing so only the most complex tasks use Opus, (2) deploying prompt caching for repeated context (90% savings on cached input), (3) moving non-urgent workloads to the Batch API (50% discount), (4) migrating off legacy models like Opus 4/4.1, and (5) monitoring extended thinking token consumption. Organizations that implement all five strategies commonly achieve 50–70% cost reduction versus unoptimized usage.

Opus 4.7 introduced a new tokenizer that can generate up to 35% more tokens for the same input text compared to Opus 4.6. While the per-token price remained unchanged at $5/$25, the increased token count means effective costs per request can increase by up to 35%. The impact is highest for code, structured data, and non-English text. Organizations should benchmark their specific workloads on Opus 4.7 before migrating from 4.6.

Claude Sonnet 4.6 at $3/$15 is more expensive than OpenAI's GPT-5.2 at $1.75/$14 on input but comparable on output. Claude Opus 4.6/4.7 at $5/$25 sits above GPT-5.2 but below OpenAI's o1 at $15/$60. Google's Gemini 3.1 Pro is priced at approximately $2/$12. Claude's competitive advantages include the full 1M-token context window at flat pricing (no long-context surcharge), strong prompt caching economics, and superior instruction-following quality, which can reduce the need for re-prompting and iterative calls.

Prompt Debt is the accumulated cost and risk created when organizations build AI systems on unmanaged, model-specific prompts without documentation, testing frameworks, or version control. When pricing changes or new models are released, organizations with high Prompt Debt cannot easily migrate their workloads to take advantage of better pricing — they're locked into whatever model their prompts were tuned for. This is why organizations on legacy Opus 4 pricing often stay there despite the 3x cost premium: they cannot confidently migrate without risking output quality. Prompt governance platforms like PromptFluent exist specifically to solve this problem.

Not automatically. While Opus 4.7 offers improved performance on coding benchmarks and agentic tasks, the new tokenizer means your effective costs may increase by up to 35% for the same workloads. The recommendation is to benchmark your specific production traffic on both models before migrating. If your workloads are code-heavy or use structured data formats, the cost increase is likely to be on the higher end of that range.

Anthropic has moved enterprise customers to a single $20 per seat per month model, with all usage billed at standard API rates on top and no bundled token allowances. Legacy enterprise plans with seat-based usage limits are being transitioned at contract renewal. For organizations with heavy usage patterns, this may not change much (since they were already paying overages). For lighter users who stayed within bundled limits, it represents a potentially significant cost increase.

If you're navigating these pricing changes and realizing that your AI cost management needs more rigor, you're not alone. At Brands at Play, we help organizations build AI strategies that balance capability with fiscal responsibility — including cost modeling, workflow optimization, and strategic implementation of AI tools across marketing and operations.

And if the concept of Prompt Debt resonates — if you recognize that your organization's prompts are undocumented, model-specific, and impossible to migrate efficiently — PromptFluent is the AI execution infrastructure platform we built specifically for this problem. It gives enterprise teams the governance, version control, and execution analytics they need to manage prompts as strategic assets, not disposable text.

Ready to get your AI costs under control? Contact Brands at Play for a strategic AI assessment, or explore PromptFluent to start managing your prompt portfolio with the discipline it deserves.

Stephanie Walters Unterweger is the Founder & CEO of Brands at Play and PromptFluent, with over 25 years of Fortune 500 marketing leadership experience. She remains an unapologetic Claude Opus enthusiast who now keeps a spreadsheet open while evangelizing. Connect with her on LinkedIn or find Brands at Play on X/Twitter.


